For most organizations, data and analytics remains a cost center—a massive investment in lakes and warehouses that hasn’t yet paid its way. Businesses have hired brilliant analysts. Yet, for the average employee, data remains a friction-filled resource.

When a sales leader needs to know why revenue is dipping, they shouldn’t have to log a ticket and hope the analyst’s definition of “revenue” matches the CRM. That is a cost. It burns time, it burns money, and it burns momentum.

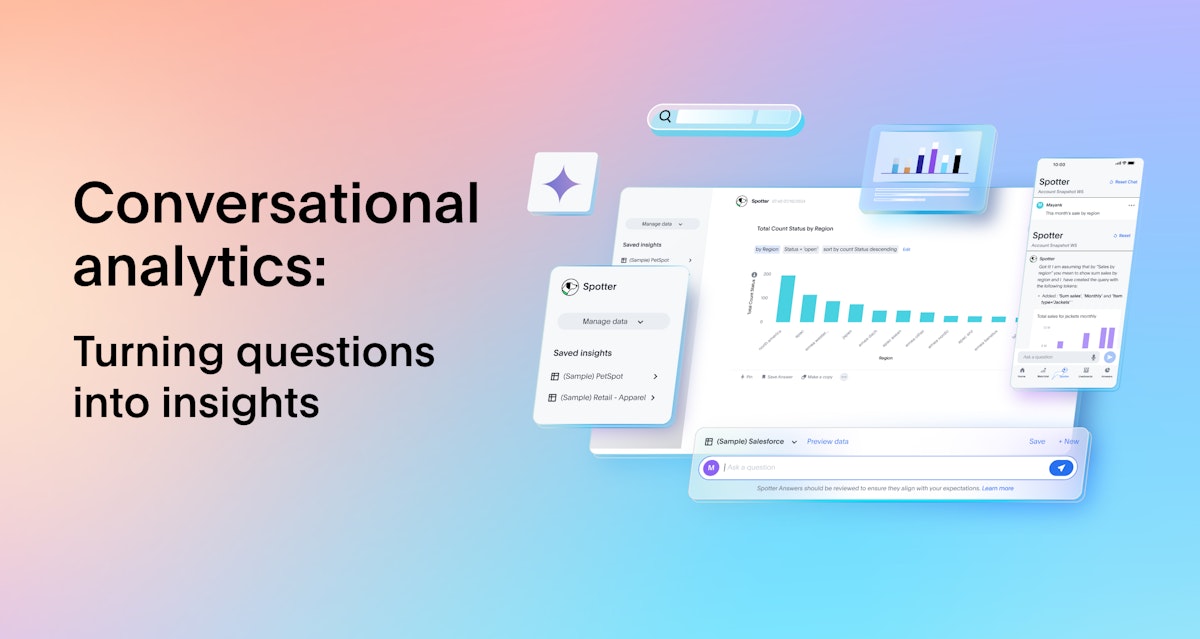

The promise of conversational analytics—and the agentic AI revolution driving it—is to flip that dynamic on its head. It promises to turn your data into a profit center by lowering the barrier to entry so drastically that anyone can ask questions, get trusted answers, and take action immediately.

The appetite for this is massive. In fact, our State of Data and Analytics Report found that 94% of business leaders say they would perform better if they had direct data access in the programs and apps where they work the most.

Here is where things get messy: As leaders rush to adopt this technology, many confuse a simple interface with a complete solution. They see a chatbot that can answer “How many widgets did we sell?” and think they’ve solved the problem.

But they haven’t. They’ve just seen the tip of the iceberg. And if you don’t understand what lies beneath, you aren’t building a profit center—you’re building a liability.

The commodity trap: Why NLQ is just the tip of the iceberg

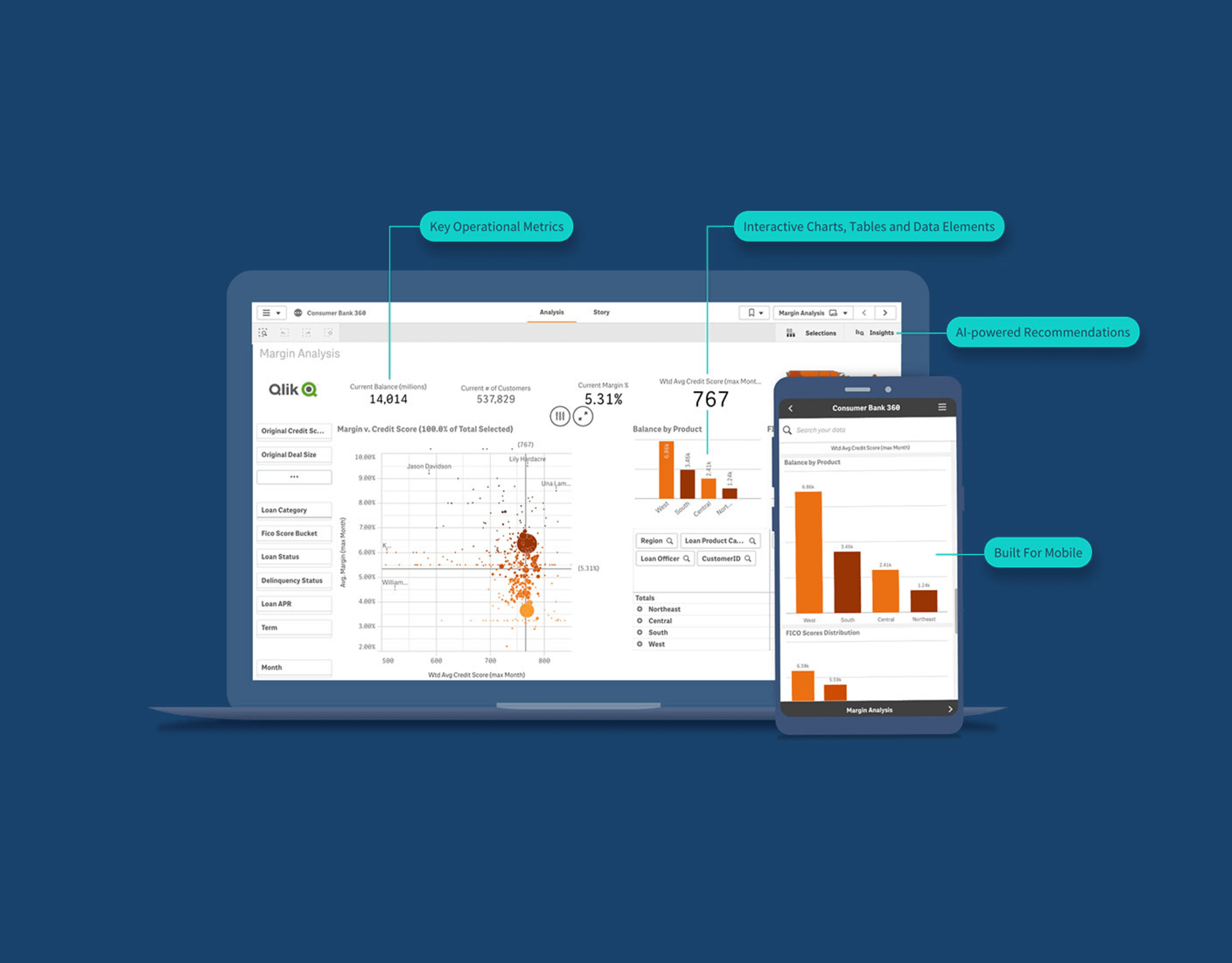

What most people see today is natural language querying (NLQ). This is the ability to ask a factual question like, “How many customers are in California?” and have the system translate it into a SQL query that returns a direct answer or visualization.
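To make the translation step concrete, here is a minimal sketch in Python. It assumes a hypothetical `customers` table in an in-memory SQLite database, and `translate_question` is a hard-coded stand-in for the language model that would normally produce the SQL. It is an illustration of the idea, not how any particular product works.

```python
import sqlite3

# Hypothetical table and rows, for illustration only.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT, state TEXT)")
conn.executemany(
    "INSERT INTO customers (name, state) VALUES (?, ?)",
    [("Acme", "CA"), ("Globex", "CA"), ("Initech", "TX")],
)


def translate_question(question: str) -> tuple[str, tuple]:
    """Stand-in for the model: maps one known question to parameterized SQL."""
    if question == "How many customers are in California?":
        return "SELECT COUNT(*) FROM customers WHERE state = ?", ("CA",)
    raise ValueError("question not understood")


def answer(question: str) -> int:
    """Translate the question, run the query, and return the single count."""
    sql, params = translate_question(question)
    return conn.execute(sql, params).fetchone()[0]


california_count = answer("How many customers are in California?")
print(california_count)
```

The sketch works only because the question maps cleanly onto one column and one filter. Nothing in it knows what “revenue” or “margin” means for a given business, which is the gap the rest of this section describes.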

Generative AI has advanced so quickly that basic NLQ has become a commodity. You can upload a CSV to a public LLM or have a developer hack together a “chat with data” tool at a weekend hackathon. It looks impressive on the surface.

But here is the reality check: NLQ is just the tip of the iceberg.

While NLQ handles the “what,” it often fails at the “why” and “what next.” It struggles with ambiguity and lacks business context. The risk is real—89% of data and analytics leaders say they’ve experienced inaccurate or misleading outputs with AI. And if an agent hallucinates a margin calculation because it doesn’t understand your business logic, your team makes bad decisions faster.
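A small worked example shows how easily a margin figure can go wrong when business logic is missing. The numbers and both function names below are assumptions made up for illustration. The point is only that two plausible readings of “margin” produce different answers from the same data.

```python
# Hypothetical figures for a single product line.
revenue = 200.0
cost = 150.0


def gross_margin(revenue: float, cost: float) -> float:
    """Profit as a share of revenue."""
    return (revenue - cost) / revenue


def markup(revenue: float, cost: float) -> float:
    """Profit as a share of cost, often confused with margin."""
    return (revenue - cost) / cost


margin_value = gross_margin(revenue, cost)
markup_value = markup(revenue, cost)

print(f"gross margin: {margin_value:.1%}")  # 25.0%
print(f"markup:       {markup_value:.1%}")  # 33.3%
```

A system with no agreed definition has no way to tell which of these the person asking actually meant.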

A chatbot that guesses is a toy. A system that knows is a business tool. To move from the former to the latter, you need the massive infrastructure submerged beneath the surface.